
Query fan-out is a technique used by AI-based search engines that involves expanding a single user query into multiple related sub-queries to cover various aspects or search intents implicit in the original question.

Instead of searching exactly for what the user wrote, the engine expands that query into several more specific queries.

This is especially useful when a search is complex or ambiguous, because it covers several search intents and surfaces results that might otherwise have been missed.

This diversity of results increases the probability of finding reliable and relevant information. It also reduces the risk of incomplete answers and of typical AI hallucinations.

For its part, Google describes its approach to query fan-out as launching multiple parallel searches across various sub-topics and different data sources to craft the answer. This allows it to show a broader and more diverse set of useful links compared to a classic search.

The search paradigm is changing rapidly. People now formulate longer and more complex queries (Google, for example, mentions questions that are nearly three times longer than in traditional search), which is precisely where this technique reaches its full potential. That explains its growing importance in today’s search engines.

Both AI Overviews, which arrived in Spain in March 2025, and AI Mode, which launched in October 2025, can use query fan-out to power their responses. However, it is in AI Mode where, due to its more conversational nature, it is typically applied more extensively to build more developed answers.

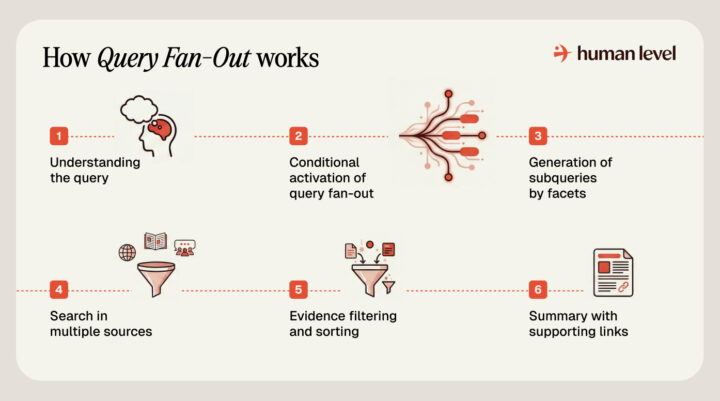

How does query fan-out work in search?

In AI Mode, and generally in any AI search engine, the engine does not follow the classic “one query, one list of links” scheme. If you already know what RAG (retrieval-augmented generation) is, query fan-out is essentially RAG with an extra step: the question is broken down into several sub-queries before anything is retrieved.

Let’s look at what a search process in AI Mode would look like starting from a question:

- Query understanding. First, it tries to understand exactly what you need. It analyzes the intent of your question, its complexity, and the context.

- Conditional activation of query fan-out. If the query is direct and closed, a traditional search is usually sufficient. But if the request is broad, open, or has several nuances, the system activates query fan-out and breaks your question down into more specific lines of investigation.

- Generation of sub-queries by facets. The decomposition creates sub-queries that cover the various facets of the original intent. Note that the engine does not limit itself to synonyms; it explores key definitions, decision criteria, comparisons, causes and possible solutions if there is a problem, recent facts and figures, expert opinions, practical steps to follow, etc.

- Multi-source searching. For each facet, it formulates a specific sub-query and looks for signals in various sources: the web index, Knowledge Graph, specialized verticals, news, or even forums, depending on the topic and what proves most useful.

- Filtering and ordering of evidence. It retrieves evidence per sub-query and ranks it by relevance, filtering out redundancies and noise to prioritize what best answers the search intent.

- Synthesis with supporting links. With the refined material, the AI composes a coherent response: a summary if you are looking for a quick overview or a more developed explanation if the query requires it, always with links to expand on the original sources.
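To make the steps above more concrete, here is a minimal sketch in Python. It is not how Google implements AI Mode, which is not public; the toy corpus, the word-overlap scoring, and the "more than four words" activation rule are all assumptions chosen only to show the flow: conditional activation, sub-queries by facet, parallel multi-source retrieval, filtering and ranking, and a stand-in for synthesis.

```python
from concurrent.futures import ThreadPoolExecutor

# Toy stand-ins for the sources an engine might consult. Every document is invented.
SOURCES = {
    "web": ["laptop battery life tests", "best laptop screen brightness for photo editing"],
    "news": ["laptop prices drop this quarter"],
    "forums": ["laptop battery life tests", "is 16 GB RAM enough for a laptop"],
}


def needs_fan_out(query: str) -> bool:
    """Hypothetical rule: treat queries longer than four words as broad."""
    return len(query.split()) > 4


def retrieve(sub_query: str, min_score: int = 2) -> list[tuple[str, str, int]]:
    """Search every source; score a document by the words it shares with the sub-query."""
    terms = set(sub_query.lower().split())
    hits = []
    for source, documents in SOURCES.items():
        for doc in documents:
            score = len(terms & set(doc.lower().split()))
            if score >= min_score:  # drop weak matches (noise)
                hits.append((source, doc, score))
    return hits


def fan_out(query: str, topic: str, facets: list[str]):
    # Conditional activation: short, closed queries run as a single search.
    if needs_fan_out(query):
        sub_queries = [f"{topic} {facet}" for facet in facets]
    else:
        sub_queries = [query]

    # Run the sub-queries in parallel; map() keeps results in input order.
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(retrieve, sub_queries))

    # Filter redundancies: keep each document once, with its best score.
    best: dict[str, tuple[str, int]] = {}
    for hits in results:
        for source, doc, score in hits:
            if doc not in best or score > best[doc][1]:
                best[doc] = (source, score)

    # Rank by score (highest first), then alphabetically for stable output.
    ranked = sorted(best.items(), key=lambda item: (-item[1][1], item[0]))
    return sub_queries, ranked


def synthesize(ranked) -> str:
    """Stand-in for the generative step: list the evidence with its source."""
    return "\n".join(f"- {doc} [{source}]" for doc, (source, _score) in ranked)


sub_queries, ranked = fan_out(
    "I need a laptop for remote work and some photo editing",
    topic="laptop",
    facets=["battery life", "screen", "prices", "RAM"],
)
print(sub_queries)
print(synthesize(ranked))
```

In a real engine each of these functions would be replaced by something far more sophisticated (a language model for the decomposition, real indexes for retrieval), but the shape of the pipeline is the same.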

As we can see, this approach allows for a more natural conversational mode. When you ask a second question or add a criterion, the system re-evaluates the intent with the context of the thread and re-opens the search, generating new, more specific sub-queries and discarding those that no longer apply.

In this way, query fan-out is not a single step, but an iterative cycle. It activates, retrieves sources, synthesizes an answer, and upon receiving further clarifications, executes the process again until the information aligns with what you truly need.
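The iterative cycle can be sketched in a few lines of Python. The `SearchThread` class and its `refine` method are hypothetical names invented for this illustration, and the sub-queries are built by simple string concatenation; the point is only to show how each follow-up opens new sub-queries and discards the ones that no longer apply.

```python
class SearchThread:
    """Keeps the context of a conversation: a topic plus the active criteria."""

    def __init__(self, topic: str):
        self.topic = topic
        self.criteria: list[str] = []
        self.sub_queries: set[str] = set()

    def refine(self, add=(), drop=()):
        """Apply a follow-up message and return (opened, discarded) sub-queries."""
        # Update the criteria with what the user added or withdrew.
        self.criteria = [c for c in self.criteria if c not in drop]
        self.criteria += [c for c in add if c not in self.criteria]

        # Re-open the search: one sub-query per criterion still in force.
        current = {f"{self.topic} {criterion}" for criterion in self.criteria}
        opened = current - self.sub_queries
        discarded = self.sub_queries - current
        self.sub_queries = current
        return opened, discarded


thread = SearchThread("laptop")
first_opened, first_discarded = thread.refine(add=["battery life", "tight budget"])
# The user follows up: budget is no longer a constraint, refurbished units are fine.
second_opened, second_discarded = thread.refine(add=["refurbished"], drop=["tight budget"])
print(second_opened, second_discarded)
```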

Let’s look at an example:

Imagine you ask: “I need a laptop for remote work and some photo editing, 14 or 15-inch screen, that lasts through the workday, and with a tight budget.”

A system with query fan-out would open sub-queries by facets such as:

- Performance: which processors and how much RAM provide fluidity in multitasking and light editing.

- Real battery life: independent tests with 8–10 hours of use.

- Screen: panel type, recommended minimum brightness.

- Portability: weight and thickness for carrying to meetings.

- Connectivity: USB-C with charging, HDMI, card reader.

- Keyboard and Spanish layout.

- Noise and temperatures.

- Warranty and technical service in Spain.

- Verified reviews.

- Comparisons within its price range.

It might even consider recent deals or the alternative of refurbished equipment if they improve the value for money.

With that evidence, the system would return a clear summary of what to look at first, a realistic price range, two or three equipment profiles according to your case, etc. And all of this accompanied by links to expand on each point and verify the details that matter most to you.
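One way to picture this example is as a small routing plan: each facet carries its own sub-query and the sources where it makes sense to look. The sketch below is illustrative only; the `Facet` class, the source names, and the wording of the sub-queries are assumptions, not something Google has documented.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Facet:
    name: str
    sub_query: str
    sources: tuple[str, ...]  # hypothetical source labels


FACETS = [
    Facet("performance", "laptop CPU and RAM for multitasking and light photo editing", ("web", "forums")),
    Facet("battery", "laptop independent battery tests 8-10 hours", ("web",)),
    Facet("warranty", "laptop warranty and technical service Spain", ("web", "forums")),
    Facet("deals", "laptop deals refurbished", ("news", "shopping")),
]


def plan_by_source(facets: list[Facet]) -> dict[str, list[str]]:
    """Group sub-queries by the source each one should be sent to."""
    plan = defaultdict(list)
    for facet in facets:
        for source in facet.sources:
            plan[source].append(facet.sub_query)
    return dict(plan)


plan = plan_by_source(FACETS)
for source, queries in plan.items():
    print(source, len(queries))
```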

This entire process is hidden from the user.

You see a single response with references, but behind the scenes, the system has launched dozens of sub-searches in parallel and consolidated only what is relevant.

That ability to ask many questions at once, organize the answers, and present them in a comprehensible manner is what mainly differentiates AI Mode from traditional search and explains why its results are often more complete.

Advantages and benefits of query fan-out

Query fan-out brings several advantages, both for users and for the overall quality of AI search.

Let’s look at the main ones:

More complete and contextualized responses

By covering different facets of the query, the response generated by the AI is much richer.

Instead of receiving a single piece of data or having to manually refine the search, the user directly obtains a global overview of the topic with the relevant information already compiled. This is especially useful for complex questions, comparisons, or decisions where many criteria exist.

Coverage of different user intents

Often, a user’s question implies several intents at once.

Query fan-out helps ensure that none goes unanswered by exploring those potential intents, which reduces the chance of omitting information that matters to the user.

Even when the original query is ambiguous, the sub-queries cover possible interpretations, increasing the relevance of the final response.

Reduction of errors and hallucinations

One of the greatest risks of generative AIs is that they can provide incorrect or invented data, commonly known as hallucinations.

By basing the response on multiple real searches and verified sources, the AI can more easily detect and filter out doubtful information. Furthermore, cross-referencing data from several sources acts as a form of verification that minimizes these problems.

In practice, Google has indicated that the diversity of sources obtained through query fan-out improves the reliability of answers, reducing the risk of errors. That said, it still does not avoid them entirely.
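The cross-referencing idea can be illustrated with a very simple rule: accept a piece of data only when at least two independent sources agree on it. This is a sketch under that assumption, with invented site names and figures; real systems weigh source quality and many other signals.

```python
from collections import defaultdict


def corroborated(claims, min_sources: int = 2) -> dict:
    """claims: iterable of (source, fact, value).

    Returns {fact: value} for values backed by at least `min_sources`
    distinct sources. If several values qualify, the best-supported wins.
    """
    support = defaultdict(set)
    for source, fact, value in claims:
        support[(fact, value)].add(source)

    accepted = {}
    for (fact, value), sources in support.items():
        if len(sources) < min_sources:
            continue  # a single, uncorroborated source is treated as doubtful
        current = accepted.get(fact)
        if current is None or len(sources) > len(support[(fact, current)]):
            accepted[fact] = value
    return accepted


claims = [
    ("site-a", "battery_hours", 9),
    ("site-b", "battery_hours", 9),
    ("site-c", "battery_hours", 14),  # outlier reported by one source only
    ("site-a", "weight_kg", 1.4),     # no second source confirms it
]
verified = corroborated(claims)
print(verified)
```

Here only the battery figure reported by two sites survives; the outlier and the unconfirmed weight are left out of the answer rather than stated as fact.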

Content discovery and new opportunities for websites

While one might think that an AI-generated response takes attention away from web pages, Google emphasizes that these features are designed to drive content exploration on the internet, not to replace it.

Thanks to query fan-out, AI Mode can show a wider range of links to relevant pages that might not appear in the top results of a traditional search.

In fact, Google points out that this approach helps people visit a greater diversity of websites to delve deeper into the topics of their query, and those sites gain qualified traffic from the links highlighted in the generative response.

Implications for SEO

The arrival of query fan-out in AI search represents a paradigm shift for SEO which, like any change, requires adaptation.

SEO is evolving toward a kind of AEO (Answer Engine Optimization), where we no longer just optimize for the search engine to show us in a ranking, but for the artificial intelligence to choose us as one of the sources when constructing an answer.

Classic SEO recommendations (useful content, good structure, structured data, web performance, etc.) remain valid, but now we must think more about topics than keywords.

Let’s briefly look at some considerations and tips:

Optimize for intents, not just keywords

AI now breaks queries down into multiple related questions, so it is no longer enough to rank a page for a specific keyword as was the case until now.

It is crucial to understand the full background of user searches and create content that answers, as far as possible, all the sub-questions someone might have about a topic.

In essence, it’s about anticipating potential follow-up questions or related doubts the user would have and covering them naturally in our content.

Build Topical Authority

AI engines tend to prefer sources that demonstrate authority and exhaustiveness on a topic. This was already true in traditional search, but even more so now.

This means your content strategy should aim to cover a topic in depth, addressing all relevant facets instead of publishing isolated, disconnected pages, as many sites have done until now.

It is advisable to structure the site into topic clusters: a pillar page on the general topic and secondary pages that develop each sub-topic or facet, all semantically interconnected.

This not only helps the user navigate your content but also shows Google that your site offers comprehensive coverage of the subject, increasing the chances that some of those pages will be chosen to answer query fan-out sub-queries.
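If you keep a map of your internal links, checking that a cluster is really interconnected takes only a few lines. The sketch below assumes you can export that map as a dictionary of "page: pages it links to" (for example, from a crawl); the URLs are hypothetical.

```python
def cluster_gaps(pillar: str, cluster_pages: list[str], links: dict[str, set[str]]):
    """Return the missing links as (from_page, to_page) pairs.

    A healthy cluster has the pillar linking to every secondary page
    and every secondary page linking back to the pillar.
    """
    gaps = []
    for page in cluster_pages:
        if page not in links.get(pillar, set()):
            gaps.append((pillar, page))
        if pillar not in links.get(page, set()):
            gaps.append((page, pillar))
    return gaps


# Hypothetical site structure.
links = {
    "/laptop-guide/": {"/laptop-guide/battery/", "/laptop-guide/screens/"},
    "/laptop-guide/battery/": {"/laptop-guide/"},
    "/laptop-guide/screens/": set(),           # forgets to link back
    "/laptop-guide/refurbished/": {"/laptop-guide/"},
}
pages = ["/laptop-guide/battery/", "/laptop-guide/screens/", "/laptop-guide/refurbished/"]
gaps = cluster_gaps("/laptop-guide/", pages, links)
print(gaps)
```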

“Answering facets” mindset

This is related to the previous point. When creating content, think about each specific question you could answer within a broad topic.

If, for example, you have a main article about traveling to Colombia, you could include additional sections or articles dedicated to climate by season, local food, transportation, safety, etc.

The more useful facets you cover clearly, the more options you’ll have to be selected as a source.

In short, enrich your content with answers to related questions the user hasn’t even asked yet, but probably will.
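A quick way to spot missing facets is to compare the list of facets you want to cover with the headings you already have. This is a deliberately naive sketch based on keyword matching, with made-up headings for the Colombia example; a real audit would look at the content itself, not just the titles.

```python
def uncovered_facets(facets: list[str], headings: list[str]) -> list[str]:
    """Return the facets that do not appear in any heading (case-insensitive)."""
    text = " ".join(headings).lower()
    return [facet for facet in facets if facet.lower() not in text]


facets = ["climate", "food", "transportation", "safety"]
headings = [
    "Climate by season in Colombia",
    "Local food you should try",
    "Getting around: transportation options",
]
missing = uncovered_facets(facets, headings)
print(missing)
```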

AI-friendly structure and format

If we want to appear as a source, we have to make it easy for the AI. To do this, the key is structuring your content clearly: use descriptive headings, concise paragraphs, lists, and tables.

AIs extract snippets of text to provide answers (what Google calls extractive responses), so it is fundamental that on your pages, the answers to potential questions stand out easily.

A well-organized format helps the AI analyze and summarize your content correctly.

Furthermore, accompany the content with structured data (remember that it should be consistent with what appears on your page). This is nothing new, as structured data is also very important in traditional search and must be maintained. In the case of AI search engines, it represents a technical advantage that helps reduce errors.
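As an illustration, the following sketch builds a JSON-LD block using the schema.org `Article` type and checks that its values match the visible text of the page. The headline, author, and date are placeholders, and `to_json_ld` and `mismatches` are hypothetical helper names; adapt the type and properties to your own content.

```python
import json

# Placeholder values: replace them with what the page actually shows.
article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "What is query fan-out?",
    "author": {"@type": "Person", "name": "Jane Doe"},
    "datePublished": "2025-01-01",
}


def to_json_ld(data: dict) -> str:
    """Wrap the data in the script tag used to embed JSON-LD in HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


def mismatches(data: dict, visible_text: str) -> list[str]:
    """Return the fields whose value does not appear in the page's visible text."""
    checks = {"headline": data["headline"], "author": data["author"]["name"]}
    return [field for field, value in checks.items() if value not in visible_text]


snippet = to_json_ld(article)
page_text = "What is query fan-out? By Jane Doe"
print(snippet)
print(mismatches(article, page_text))
```

The consistency check is intentionally basic, but it captures the rule that matters: the markup should describe what the reader can actually see on the page.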

Think that, in a way, you are writing for the user and for the AI at the same time. The content must be reader-friendly for the human, but also clear for a machine trying to understand and reuse it.

Emphasis on quality, reliability, and EEAT

Since AI Mode prioritizes information from trustworthy sources to avoid misinforming the user (or at least to try), EEAT is fundamental. Strong signals of experience, expertise, authoritativeness, and trust are now more important than ever.

The usual recommendations in this regard remain the same: have well-documented, precise, and up-to-date content; show authorship with credentials or experience in the topic; cite sources where appropriate, etc.

Having content with original data, proprietary research, or different perspectives will also provide added value that the AI won’t find elsewhere.

The summary is simple: if we want to appear in AI Mode, we have to work on building a solid reputation.