
Large language models often give false or erroneous answers with baffling conviction. If you use AI tools to research, generate content, or make decisions, this behavior poses a real reliability problem. A recent study published on arXiv reports identifying, for the first time, the artificial neurons associated with this phenomenon and shows that it can be mitigated with a targeted, almost surgical intervention.

Why models hallucinate

Any AI architecture that relies on neural networks, such as the transformers used by LLMs, will always have some error rate. Scaling a model (more parameters and more training data) reduces certain types of errors, but it does not eliminate hallucinations. The Omniscience benchmark by Artificial Analysis illustrates this clearly: even the largest models on the market continue to generate incorrect responses with high apparent confidence.

Hallucinations are due to several factors:

- The quality or lack of training data for certain questions.

- Models are trained to predict the most probable next word (strictly speaking, the next token), not the most truthful one. The answer repeated most often in the training data usually coincides with the correct one, but not always. Recall the anecdote about Einstein: told that a hundred scientists had set out to refute his theory of relativity, he is said to have replied: “Why a hundred? If I were wrong, one would be enough.”

- During the supervised fine-tuning (SFT) stage, the model is trained on human dialogues so that it learns to follow instructions. If the people in those dialogues were too sycophantic or avoided acknowledging that they didn’t know something, the model will imitate those behaviors.

- RLHF (Reinforcement Learning from Human Feedback), a post-training stage that further adjusts the model’s parameters, introduces a similar distortion: human reviewers tend to reward answers that sound correct over honest answers that admit uncertainty.

The result is a model that prefers to confabulate rather than say “I don’t know.”
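The gap between “most probable” and “most truthful” can be sketched in a few lines. This is a toy illustration, not how any particular model is implemented: the prompt, the candidate words, and the scores in `logits` are all invented to mimic training data in which a wrong answer appears more often than the right one.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits.values())
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical next-word scores for "The capital of Australia is ...".
# The numbers are made up: the popular wrong answer scores highest.
logits = {"Sydney": 2.1, "Canberra": 1.7, "Melbourne": 0.4}

probs = softmax(logits)

# Greedy decoding picks the most probable word, whether or not it is true.
greedy_choice = max(probs, key=probs.get)
print(greedy_choice, round(probs[greedy_choice], 2))
```

Nothing in this selection step checks facts; it only ranks candidates by probability, which is why a frequent error can win.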

Neuroscience applied to the AI brain

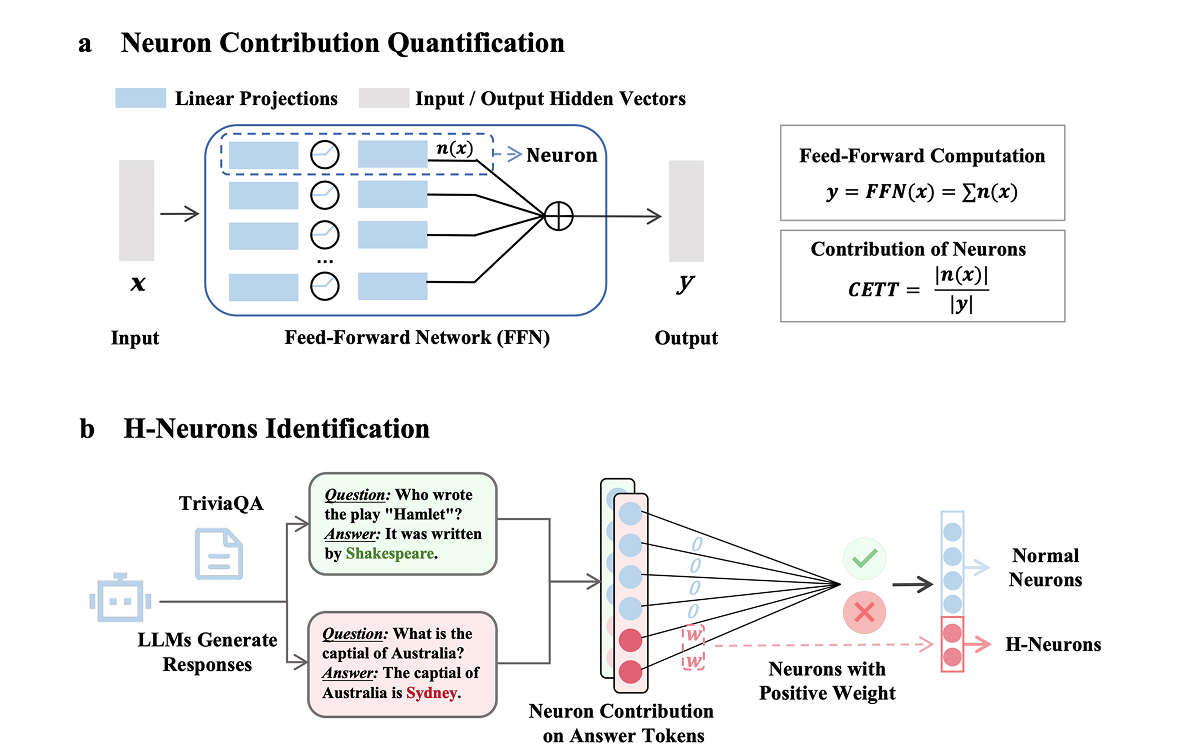

The study “H-Neurons: On the Existence, Impact, and Origin of Hallucination-Associated Neurons in LLMs” addresses the problem from a different angle: instead of intervening on the data or the training process, the researchers dissect the already trained model to identify which artificial neurons are most directly tied to hallucinations.

To do this, they set the model’s sampling to maximum randomness (its “creativity” setting) and repeatedly ask it a question whose answer they know and which the model consistently gets wrong. For each neuron, this analysis yields the CETT (Causal Effect on Token Trajectory) metric, which measures how much that neuron contributes to the model’s internal state as it passes from one layer to the next. By analogy, it is like knowing which neurons contribute most at each step of your subconscious thinking before you reach an answer. The CETT values of all the neurons in the model are then fed to a linear classifier, a simple machine-learning model that, in this case, separates the “good” neurons from the ones associated with hallucinations.
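The idea of letting a linear classifier point at the relevant neurons can be sketched with synthetic data. Everything below is an assumption made for illustration: the random matrix `X` stands in for per-neuron contribution scores, the `planted` indices are hypothetical, and the L1 penalty (which pushes most weights to exactly zero) is our choice for obtaining a sparse result, not a detail taken from the study.

```python
import numpy as np

rng = np.random.default_rng(0)

n_samples, n_neurons = 400, 50
planted = [3, 17]  # hypothetical "hallucination" neurons in this toy setup

# Stand-in for per-neuron contribution scores (one row per model answer).
X = rng.normal(size=(n_samples, n_neurons))
# Synthetic labels: 1 = hallucinated answer, 0 = faithful answer.
y = (X[:, planted].sum(axis=1) > 0).astype(float)

def fit_sparse_logistic(X, y, l1=0.05, lr=0.1, steps=500):
    """Logistic regression with an L1 penalty, fitted by proximal gradient descent."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
        w -= lr * (X.T @ (p - y) / len(y))
        b -= lr * np.mean(p - y)
        # Soft-thresholding sets uninformative weights to exactly zero.
        w = np.sign(w) * np.maximum(np.abs(w) - lr * l1, 0.0)
    return w, b

w, b = fit_sparse_logistic(X, y)

# The neurons with the largest absolute weights are the classifier's suspects.
top_neurons = sorted(np.argsort(-np.abs(w))[:2].tolist())
print(top_neurons)
```

In this toy setting the classifier recovers the two planted neurons; with a real model the inputs would be measured contribution scores rather than random numbers.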

By testing different questions, they discovered that the H-Neurons (as they call the hallucination-associated neurons) are the same regardless of the question asked.

A minuscule proportion with a disproportionate effect

The number of H-Neurons is remarkably small: approximately 1 in every 100,000 neurons. Thus, even in large language models with more than 100 billion parameters, only a handful of neurons need to be adjusted to achieve better responses.
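To get a feel for that ratio, here is a back-of-the-envelope calculation. The model shape is hypothetical and chosen only for illustration, and counting only feed-forward neurons is our simplifying assumption. Note that the ratio applies to neurons, not parameters: each neuron owns many weights.

```python
# Hypothetical model shape, chosen only for illustration.
n_layers = 80
ffn_neurons_per_layer = 28_672

total_neurons = n_layers * ffn_neurons_per_layer
h_neuron_ratio = 1 / 100_000  # proportion quoted in the article

expected_h_neurons = total_neurons * h_neuron_ratio
print(total_neurons, round(expected_h_neurons))
```

Under these assumptions, a model with roughly 2.3 million feed-forward neurons would contain on the order of 23 H-Neurons.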

The experiment also revealed an unforeseen connection: reducing the weights of these neurons (the equivalent of weakening the synapses between neurons in a biological brain) not only decreases hallucinations but also makes the model less sycophantic. Conversely, when those weights are increased to the maximum, hallucinations become more frequent and the model becomes more inclined to give the answer the user seems to want to hear, even if it is incorrect. The study takes this as evidence that hallucinations and sycophancy are closely linked.
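Turning a few neurons down or up can be sketched on a toy feed-forward block. This is a minimal illustration under our own assumptions, not the study’s code: the layer sizes, the random weights, and the `h_neurons` indices are all hypothetical. Multiplying a neuron’s activation by a factor is equivalent to multiplying its outgoing weights by the same factor.

```python
import numpy as np

rng = np.random.default_rng(0)

d_model, d_hidden = 8, 32
W_in = rng.normal(size=(d_model, d_hidden))
W_out = rng.normal(size=(d_hidden, d_model))

def feed_forward(x, neuron_scale=None):
    """Toy feed-forward block with an optional per-neuron multiplier."""
    hidden = np.maximum(x @ W_in, 0.0)  # ReLU activations, one per neuron
    if neuron_scale is not None:
        hidden = hidden * neuron_scale
    return hidden @ W_out

h_neurons = [5, 11]  # hypothetical indices of the neurons to adjust

def scale_for(alpha):
    """Multiplier vector: alpha for the selected neurons, 1 for the rest."""
    scale = np.ones(d_hidden)
    scale[h_neurons] = alpha
    return scale

x = rng.normal(size=d_model)
baseline = feed_forward(x)
suppressed = feed_forward(x, scale_for(0.0))  # selected neurons switched off
amplified = feed_forward(x, scale_for(3.0))   # selected neurons tripled
```

Only the contribution of the selected neurons changes; every other neuron passes through untouched, which is what makes this kind of intervention so targeted.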

The study adds a relevant implication for safety: more sycophantic models are also more susceptible to bypassing their content restrictions. A model that prioritizes user satisfaction over truthfulness is, structurally, more manipulable.

Despite the discovery, these hallucinatory neurons (and with them, all hallucinations) cannot be completely eliminated, because they are intertwined with language understanding: removing them would degrade the model’s ability to connect words. Their effect can be considerably mitigated, but we must remember that the other factors mentioned at the beginning of the article could still cause hallucinations.

The hallucination-correcting effect of this new technique is greater in small models, which have fewer parameters and fewer neurons, so the proportion of H-Neurons is higher.

What changes—and what doesn’t—for professionals using AI

This study does not solve the hallucination problem in its entirety. Structural causes such as the quality of training data, optimization for probability instead of truthfulness, and RLHF biases remain intact. What it provides is a precise intervention lever on the already trained model, which opens the possibility that future models will incorporate this adjustment by default and therefore become more reliable.

Even so, no one knows if the problem will be fully resolved in the future. For that to happen, it will likely be necessary to radically change the way these models are trained or built. Whatever comes next, we will keep you informed on our blog.