What is indexability?

The indexability of a website is the ease with which search engines can find it, crawl its contents and index it. A website is indexable when it is linked, its content is accessible to robots and it is easy to classify in the relevant search categories.

Phases of website indexing

Crawling

Search engines rely primarily on a program known as a crawler, spider or robot. In the case of Google, this robot is called Googlebot. It is a kind of browser that roams the Web trying to discover and crawl as much content as possible.

Search engine robots mainly use links to discover new pages, so if they find a link pointing to a page of a website, they will then use all the links available on that page to crawl the rest of the pages that make up the site.
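As a rough illustration of link-based discovery, the sketch below extracts the internal links from one page, which is the basic step a robot repeats on every page it visits. It is a simplified assumption of how such a step could look, not how Googlebot is implemented; `LinkCollector`, `internal_links` and the `example.com` URLs are hypothetical names chosen for the example.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def internal_links(page_url, html):
    """Return the same-host URLs linked from `html`, in order, without repeats."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    found = []
    for href in collector.hrefs:
        # Resolve relative links and drop the #fragment part.
        absolute, _fragment = urldefrag(urljoin(page_url, href))
        if urlparse(absolute).netloc == host and absolute not in found:
            found.append(absolute)
    return found


# Hypothetical page content used only for this example.
sample_html = """
<a href="/products/">Products</a>
<a href="contact.html#form">Contact</a>
<a href="https://other.example/">Partner</a>
<a href="/products/">Products again</a>
"""
links = internal_links("https://www.example.com/index.html", sample_html)
print(links)
```

A page that no other page links to would never appear in such a list, which is why unlinked pages tend to go undiscovered.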

Although links are the primary way search engines discover new content, website managers can also provide search engines with a complete “listing” of the pages they wish to have indexed through a sitemap file. This is a file in XML format that lists the pages we want the robots to index.
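A minimal sketch of how such a file could be generated is shown below. It follows the public sitemaps.org format (a `urlset` element containing one `url`/`loc` pair per page); the `build_sitemap` helper and the `example.com` URLs are assumptions made for the example.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap document, as a string, listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages we want robots to index.
sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/products/",
])
print(sitemap_xml)
```

The resulting file is usually saved as `sitemap.xml` at the root of the site and can be submitted to the search engine or referenced from robots.txt.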

In general, search engine robots try to crawl all the pages they find linked, so if we do not want them to crawl a specific page, we must expressly tell them so. We can do this in two ways: with instructions in a special file called robots.txt, in which we list the sections of our website that we do not want robots to crawl, or with a meta tag called robots, in which we can prohibit robots from indexing a particular piece of content.
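To see how a robots.txt rule is interpreted, the following sketch uses Python's standard `urllib.robotparser` module to check whether a given URL may be crawled. The rules and the `example.com` URLs are hypothetical, and a real search engine may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: block the cart and internal search results.
robots_txt = """\
User-agent: *
Disallow: /cart/
Disallow: /internal-search/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

product_allowed = parser.can_fetch("Googlebot", "https://www.example.com/products/")
cart_allowed = parser.can_fetch("Googlebot", "https://www.example.com/cart/checkout")
print(product_allowed, cart_allowed)
```

The meta tag alternative lives in the page itself, typically as `<meta name="robots" content="noindex">` inside the `<head>` element.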

When robots discover a new page, they store a version of it in a temporary store of the search engine called the cache. The page is identified in the search engine’s file system by a unique identifier: its URL. You can check the version of any page in the search engine’s cache by simply prefixing the URL with “cache:”. For example: “cache:http://www.elmundo.es/”.

Search engine robots frequently visit websites to keep their results updated and to discover new content and more pages. These robots dedicate a limited amount of time to this task, known as the crawl budget. If your server is slow, or if the robot runs into erroneous content, non-existent pages caused by broken links, or duplicate content, you will be wasting a large part of the time that the search engine could dedicate to crawling your website.

Here are some recommendations that will make it easier for search engines to crawl your website:

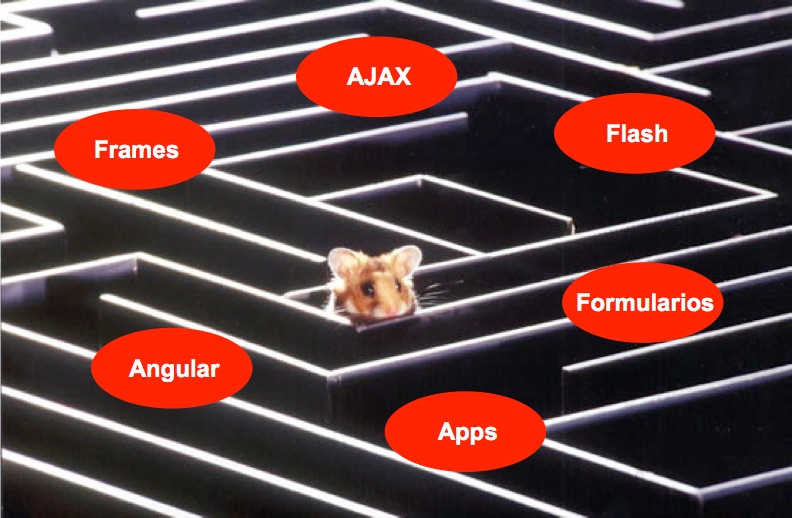

- Use standard HTML code in your pages: avoid content management systems that make intensive use of JavaScript, as search engines may not execute this type of code in a predictable and consistent manner.

- Make sure that each piece of content has a unique URL: two different pages should never be served from the same URL, and the same page should never be accessible from two different URLs.

- Include a menu from which you link to the most important pages and content sections of your website: it is not advisable for the menu to include more than one level of drop-down links. It is advisable to link directly to both product families and subfamilies if their importance justifies it.

- If your website relies on an internal search engine for navigation in preference to menu links (as on a real estate or travel website), be sure to display a directory that points to the results pages you want search engines to be able to crawl: search engines cannot use search forms to discover, for example, your “apartments in Guadalajara” or “hotels in the Canary Islands” page if they do not find a link pointing to those listing pages.

- Your most profitable products, most demanded services or most read content should be fewer clicks away from the home page: search engines crawl the pages in the first levels of navigation more frequently, for example pages linked from the menu or from the home page itself. Make sure that your most important pages are close to the home page and have proportionally more internal links than the other pages.

- Include focused, unique and rich text content: preferably content that does not appear on other websites or on other pages of your own site.

- Provide supplemental text for content that is not directly crawlable, such as images or videos: for example, through the alt attribute of image tags, or through captions and text excerpts for videos.

- Make sure that your server responds quickly to page download requests and does not experience frequent crashes: this lets search engines crawl more pages on each visit and keeps them from registering your website as a low-quality site because of a high number of server errors.
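The unique-URL recommendation in the list above can be checked with a small script. The sketch below groups URL variants that, under the stated assumptions, would serve the same content: it ignores host-name case, parameter order and a hypothetical set of tracking parameters (`TRACKING_PARAMS`). Whether two variants really return the same page depends on the site, so treat the output as candidates to review rather than confirmed duplicates.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of query parameters that do not change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign"}


def normalize(url):
    """Reduce a URL to a comparable form (assumed rules, see lead-in)."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    path = parts.path or "/"
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS]
    query = urlencode(sorted(kept))
    return urlunsplit((parts.scheme.lower(), host, path, query, ""))


def duplicate_groups(urls):
    """Map each normalized URL to its variants, keeping only groups of 2+."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


# Hypothetical URLs found while crawling a site.
crawled = [
    "https://www.example.com/shoes?color=red&size=42",
    "https://WWW.example.com/shoes?size=42&color=red",
    "https://www.example.com/shoes?color=red&size=42&utm_source=newsletter",
    "https://www.example.com/boots",
]
groups = duplicate_groups(crawled)
for canonical, variants in groups.items():
    print(canonical, len(variants))
```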

Although these are some of the key points, there are many others that we can optimize to achieve more frequent and effective crawling. The indexability section of an SEO audit aims to detect possible problems or obstacles that search engines may encounter when crawling a website. After establishing a diagnosis, the appropriate recommendations are made regarding content, programming or the server itself to ensure that search engines can optimally access all the content that we consider important for our target audience.
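One check such an audit might include is the click depth of each page, that is, how many clicks separate it from the home page. The sketch below computes it with a breadth-first search over a link graph; the `site` dictionary is a hypothetical, hand-written graph standing in for the result of a real crawl.

```python
from collections import deque


def click_depths(link_graph, home):
    """Return {page: minimum number of clicks from `home`} for reachable pages."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/products/", "/blog/"],
    "/products/": ["/products/shoes/"],
    "/products/shoes/": ["/products/shoes/red-sneaker"],
    "/blog/": [],
    "/old-landing": [],
}
depths = click_depths(site, "/")
# Pages that exist but cannot be reached by following links from the home page.
orphans = sorted(set(site) - set(depths))
print(depths)
print(orphans)
```

Important pages with a high depth, or pages that show up as orphans, are natural candidates for additional internal links.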

Classification or analysis

After crawling the website, the next step search engines take is to analyze each piece of content to classify it into relevant or related search categories. That is, the search engine must answer the question, “For what searches might the content on this page be a useful result?”

As you might expect, for each new piece of content that search engines find and crawl, there is already similar content in the index competing for the same rankings. The search engine must then decide whether the crawled content should be added to its index and in what order it should appear in relation to other content in the same search category.

To establish the relevant search categories for a given piece of content, search engines analyze all the text they find on the page and pay particular attention to the words and phrases that appear in titles, headings, subheadings, bold text and anchor text. We call these prominent areas because the presence of certain words in them “weighs” more heavily on the ranking and ordering of the page in the results than if the same words simply appear in a paragraph of text.
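The idea of prominent areas can be illustrated with a toy scoring script that counts each word once per occurrence but multiplies the count by a weight when the word sits inside a prominent tag. The weights in `WEIGHTS` are invented for the example; search engines do not publish theirs, and real ranking involves far more signals than this.

```python
import re
from collections import Counter
from html.parser import HTMLParser

# Hypothetical weights for prominent areas; plain text counts as 1.
WEIGHTS = {"title": 5, "h1": 4, "h2": 3, "h3": 3, "strong": 2, "b": 2, "a": 2}


class ProminenceScorer(HTMLParser):
    """Scores words by how prominent the surrounding tags are."""

    def __init__(self):
        super().__init__()
        self.open_counts = Counter()  # currently open prominent tags
        self.scores = Counter()

    def handle_starttag(self, tag, attrs):
        if tag in WEIGHTS:
            self.open_counts[tag] += 1

    def handle_endtag(self, tag):
        if self.open_counts[tag] > 0:
            self.open_counts[tag] -= 1

    def handle_data(self, data):
        active = [WEIGHTS[t] for t, n in self.open_counts.items() if n > 0]
        weight = max(active, default=1)
        for word in re.findall(r"[a-z0-9]+", data.lower()):
            self.scores[word] += weight


# Hypothetical page used only for this example.
page = (
    "<html><head><title>Hotels in the Canary Islands</title></head>"
    "<body><h1>Canary Islands hotels</h1>"
    "<p>Compare hotels near the beach.</p></body></html>"
)
scorer = ProminenceScorer()
scorer.feed(page)
print(scorer.scores.most_common(3))
```

In this toy model, "hotels" scores highest because it appears in the title, the main heading and the body text, while "beach" appears only in a paragraph.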

Indexing itself

Generally, search engines will add all discovered pages of a website to their index and rank each page according to the searches for which they consider it relevant. But there are some cases where the search engine may decide not to index a page that it did crawl:

- If a search engine detects that many pages of the same domain are very similar to each other, it may decide not to index all of them but only those it considers most important. This situation is what we call duplicate content, and it is important to prevent it as much as possible.

- If a search engine detects that many of the crawled pages have little or no content, or that the content is disproportionately low in relation to the advertising, it may classify these pages as thin content and will not index them either.
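To get a feel for how similar two pages of your own site are, one common technique is to compare their overlapping word sequences (shingles) with the Jaccard index. The sketch below is only an approximation for auditing purposes; it is not a description of how any search engine detects duplicate content, and the sample texts are invented.

```python
def shingles(text, size=3):
    """Return the set of consecutive word sequences of length `size`."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(text_a, text_b):
    """Similarity between 0.0 (nothing shared) and 1.0 (identical shingles)."""
    set_a, set_b = shingles(text_a), shingles(text_b)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


# Hypothetical page texts.
page_a = "red running shoes for men with free shipping"
page_b = "blue running shoes for men with free shipping"
page_c = "contact our customer service team by phone"

print(round(jaccard(page_a, page_b), 2))
print(round(jaccard(page_a, page_c), 2))
```

Pairs of pages with a high score are candidates for consolidation or for more differentiated content.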

It is important to know the actual number of pages of your website in order to compare it with the number of pages that search engines have indexed. The ratio of indexed pages to actual pages is called saturation and, of course, we want it to be as close to 100% as possible.
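As a worked example, the calculation below assumes saturation is expressed as indexed pages divided by actual pages, as a percentage. The page counts are made up for illustration.

```python
def saturation(indexed_pages, total_pages):
    """Percentage of a site's pages that the search engine has indexed."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    return 100.0 * indexed_pages / total_pages


# Hypothetical site: 1,000 real pages, 850 of them indexed.
site_saturation = saturation(850, 1000)
print(f"{site_saturation:.1f}%")
```

A value well below 100% suggests that some pages are not being crawled or are being discarded as duplicate or thin content.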

We can get an approximate idea of the pages that a search engine has indexed on a given website by doing a search with the expression “site:domain.ext” in Google. For example, if we search for “site:elmundo.es” Google tells us that this domain has approximately 815,000 indexed pages.

If we manage a website, we can verify our ownership of it in Google Search Console, where we can access much more precise data on the crawling, classification and indexing of our site.

Additional references